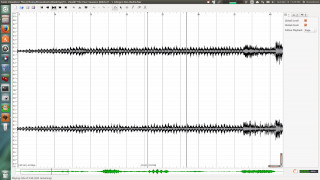

Within the context of the kind of data-driven approaches for the estimation of tempo, beats, and downbeats we explore in this tutorial, a critical aspect is how to acquire some data to learn from, and ultimately to evaluate upon. The "data" in question refers to annotations of beat and downbeat locations from which either: i) a global tempo value for a piece of music with roughly constant tempo, or ii) a local tempo contour can be derived.

The workflow by which beat and downbeat annotations can be obtained typically involves an iterative process departing from an initial estimate, e.g., marking beat locations only, and then correcting timing errors, followed by a labelling process to mark the metrical position of each beat. This initial estimate could be obtained by hand, i.e., by tapping along with the musical audio excerpt in software such as Sonic Visualiser, or alternatively, by running an existing beat estimation algorithm, e.g., from madmom or librosa, and then loading the result as an annotation layer. We can consider these approaches to be manual and semi-automatic, respectively. Within the beat tracking literature and the creation of datasets, both approaches have been used. To begin with, we'll focus on the fully manual approach.

Fig. 2 Manual annotation example in Sonic Visualiser. Listening to the excerpt and tapping along in real time to mark the beat locations.

Note that it is usually not possible to start tapping straight away, as it takes some time for a listener to infer the beat (even for familiar pieces of music). In this case, the tapper begins at the start of the 3rd bar, meaning the first two bars will need to be filled in later.

Having completed one real-time pass over the musical excerpt, the next stage is to go back and listen again, but this time with the beat annotations rendered as audible clicks of short duration.

As becomes clear from watching the clip above, the timing of the taps is not super precise! As such, many of the beats need to be altered to compensate for temporal imprecision. While this could simply be sloppy timing on the part of the tapper, in practice it is likely a combination of human motor noise and jitter [1] in the acquisition of the keyboard taps. In this case, there are no duplicated or missing taps (besides those of the first two bars), and so the tap-editing operations are exclusively performed by shifting the annotations, using the waveform as a guide, and listening back for perceptual accuracy.
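As noted earlier, a set of beat annotations can yield either a global tempo value or a local tempo contour. The following is a minimal sketch of one way to compute both from a list of beat times in seconds, using only the Python standard library. The function names and the example beat times are hypothetical, and taking the median inter-beat interval as the global estimate is an assumption made here for robustness to a few imprecise taps, not a method prescribed by the tutorial.

```python
from statistics import median


def inter_beat_intervals(beat_times):
    """Return the durations (in seconds) between consecutive beat annotations."""
    intervals = [b - a for a, b in zip(beat_times, beat_times[1:])]
    if not intervals:
        raise ValueError("need at least two beat annotations")
    if any(ibi <= 0 for ibi in intervals):
        raise ValueError("beat times must be strictly increasing")
    return intervals


def global_tempo(beat_times):
    """Estimate a single tempo (BPM) from the median inter-beat interval.

    Assumption: the piece has a roughly constant tempo, so one value
    is a meaningful summary.
    """
    return 60.0 / median(inter_beat_intervals(beat_times))


def local_tempo_contour(beat_times):
    """Return (time, BPM) pairs, one per inter-beat interval.

    Each tempo value is attached to the beat that starts the interval.
    """
    intervals = inter_beat_intervals(beat_times)
    return [(t, 60.0 / ibi) for t, ibi in zip(beat_times, intervals)]


# Hypothetical annotations: five beats spaced 0.5 s apart.
example_beats = [0.0, 0.5, 1.0, 1.5, 2.0]
example_global = global_tempo(example_beats)
example_contour = local_tempo_contour(example_beats)
```

For uncorrected taps, the local contour will fluctuate from beat to beat simply because of the timing imprecision described above, so it is best computed after the annotations have been edited.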